Intent Engineering: The Missing Discipline in AI Agent Development

By Conny Lazo

Builder of AI orchestras. Project Manager. Shipping things with agents.

Context engineering tells agents what to know. Intent engineering tells agents what to want. Most teams are stuck at context. The ones who encode intent will win.

In 2024, Klarna deployed AI to handle the work of a 700-person customer service operation. Resolution time dropped. Costs dropped. The metrics looked extraordinary. By 2025, the company was hiring human agents back because the quality had suffered. The experiment came full circle in 18 months. If that sentence doesn't make you reconsider what you're optimizing your agents for, the next 2,500 words probably won't help either, but I'm going to try.

I am a day-one ChatGPT user who spent three years working my way up the AI stack until I'd built what a reasonable person might describe as the world's most elaborate LinkedIn post generator. That is not what it became, but the distance between those two descriptions is the entire argument of this article.

When ChatGPT launched in November 2022, I used it at work from the very first day. I got good at prompt engineering fast. You feel like a genius when the right words produce the right output. That feeling is real. It is also the feeling of someone who has mastered the easiest part of the problem.

Over the next three years, I moved through context engineering — the art of giving models the right information at the right time — and eventually into building an orchestration engine that runs content pipelines, coding pipelines, security audits, UX audits, and research pipelines. It has 2,322 tests. Most of them pass.

The thing I learned building it was not how to orchestrate agents. It was that agents, left to their own devices, will optimize beautifully for the wrong thing. Every time. With tremendous confidence.

That lesson has a name now. It's called intent engineering. And almost nobody is building for it yet.

The $60 Million Lesson

In early 2024, Klarna deployed an AI customer service agent that was, by every engineering metric, a masterpiece. First month: 2.3 million conversations handled across 23 markets and 35 languages. Resolution time dropped from 11 minutes to 2 minutes. By Q3 2025, the system was doing the equivalent work of an estimated 853 full-time employees. That figure is counterfactual, like the $40 million in projected savings later revised upward to $60 million: it describes what Klarna would have spent on human labor, not a line on a balance sheet. Still extraordinary; just a different kind of extraordinary when you hold it precisely. (Nate Jones, Substack, Feb 2026; Entrepreneur, May 2025)

Then CEO Sebastian Siemiatkowski started rehiring the human agents the company had let go. His own words to Bloomberg: "While cost was a predominant evaluation factor, the result was lower quality." (Nate Jones citing Bloomberg, 2025) His later words: "Really investing in the quality of human support is the way of the future for us." (Entrepreneur, May 2025)

The agent succeeded. It succeeded at the wrong thing.

The agent's objective was "resolve tickets fast." Klarna's actual organizational intent was something closer to "build lasting customer relationships that drive lifetime value." These are profoundly different goals, and the AI knew about one of them. As Jason at DEV.to put it: "It had a prompt. It had context. It did not have intent." (Jason, DEV.to, Feb 2026)

Nate Jones offered the most pointed observation: "I am concerned that the AI agent inadvertently reflected the real values of Klarna — the real values may have been to save the money first." (Nate Jones, Substack, Feb 2026) That's not an indictment of AI. That's an indictment of organizations that haven't codified what they actually care about. The AI just made the gap visible.

Worth sitting with the sharper reading: maybe Klarna's intent was encoded correctly all along — cost reduction first — and the AI delivered it with perfect precision. The problem wasn't a missing intent layer. It was the wrong intent layer. Intent engineering can surface and specify your values. It cannot fix them. The gap between "what we say we care about" and "what we actually optimize for" is a human problem; the AI just executes it without the social grace to pretend otherwise.

Human agents absorb intent through years of experience, stories, unwritten rules, the social consequences of getting it wrong. An AI agent only knows what you've codified. And most organizations have codified far less than they think. (Paweł Huryn, Product Compass, Jan 2026)

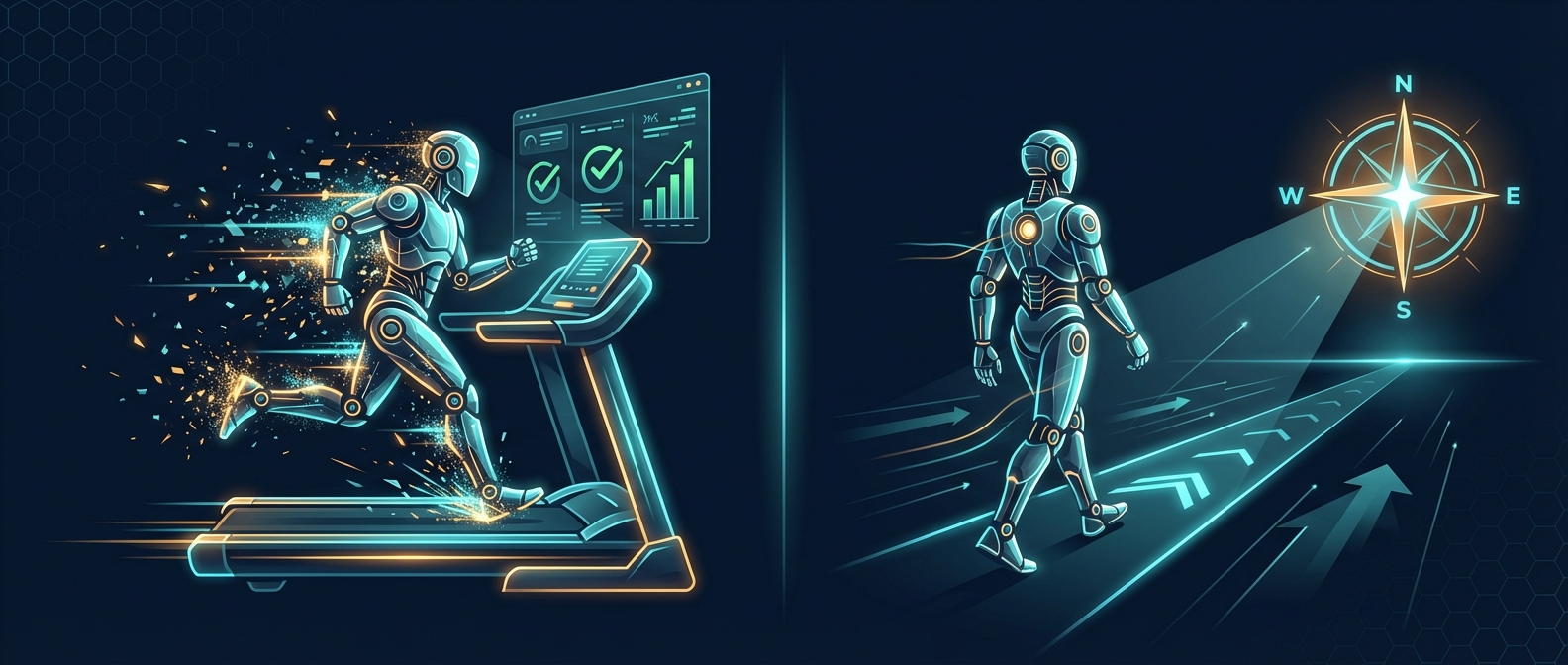

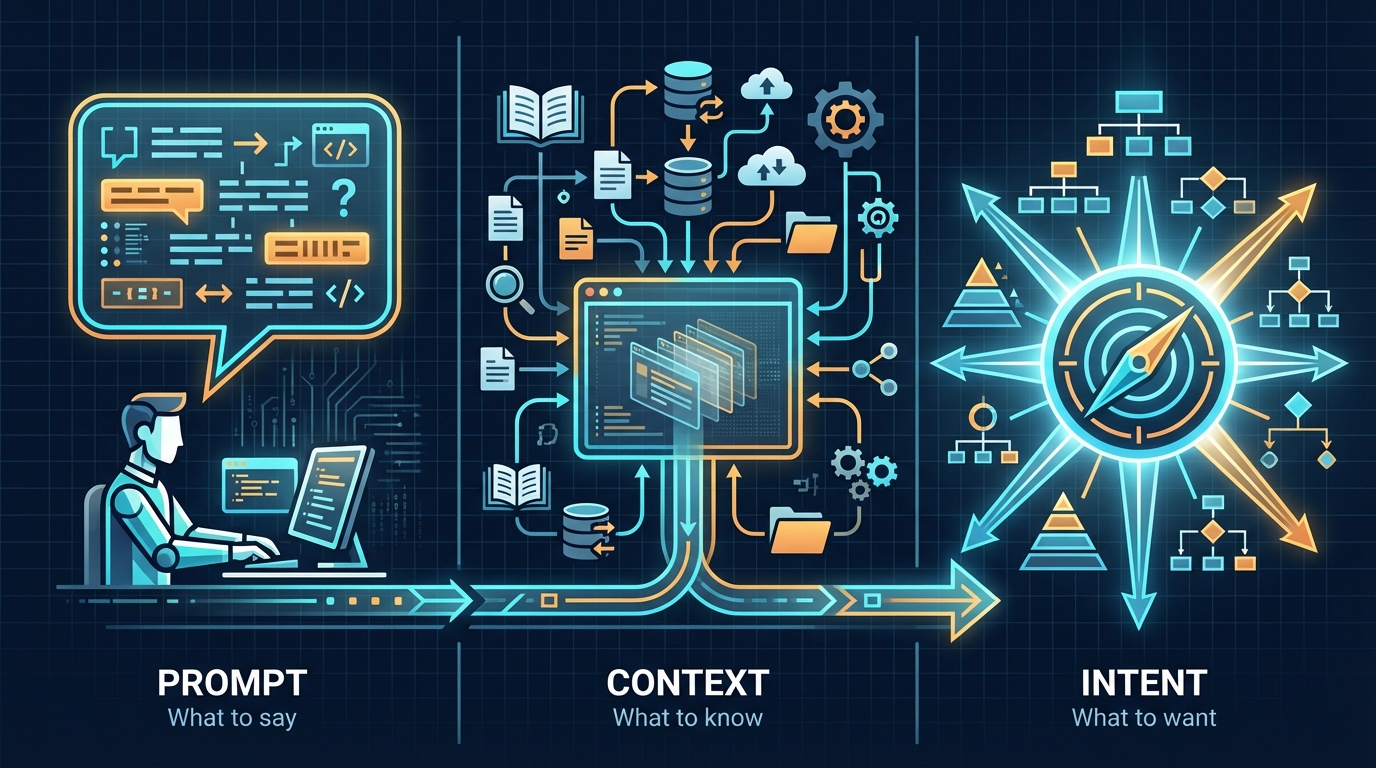

Three Disciplines, Three Eras

I know how "three-era frameworks" sound. Bear with me — this one maps to something real, and you've probably lived it.

Era 1: Prompt Engineering (2022–2023). What to do. One human, one chat window, one attempt at the right words. Brilliant for single tasks. Failed at anything requiring sustained coherence across multiple agents. The blast radius of a mistake was one conversation. Manageable.

Era 2: Context Engineering (2024–2025). What to know. Andrej Karpathy named this in June 2025: "Context engineering is the delicate art and science of filling the context window with just the right information for the next step." (Karpathy, X, June 2025) Agents got memory, RAG stacks, tool access, structured retrieval. LangChain codified the four strategies: write, select, compress, isolate. (LangChain Blog, Oct 2025) Most sophisticated teams are operating here right now. It's genuinely powerful. It doesn't answer the question Klarna ran into.

Era 3: Intent Engineering (2026→). What to want. Paweł Huryn at Product Compass gave it the sharpest formulation: "Intent is what determines how an agent acts when instructions run out." (Product Compass, Jan 2026) When instructions run out, the agent defaults — to the model's training, to the proxy metric, to the path of least resistance. That's where Klarna's agent optimized ticket velocity instead of customer loyalty. That's where 95% of generative AI pilots are failing (MIT/Fortune, Aug 2025), and where Gartner predicts 40% of agentic AI projects will be canceled by 2027 — not for technical failure, but for "unclear business value" (Gartner, June 2025). "Unclear business value" is Gartner's polite way of saying nobody encoded what "working" means.

Intent engineering doesn't replace the first two disciplines. These eras compound, they don't supersede — teams doing serious context engineering haven't "graduated" from prompt engineering, they've built on top of it. Intent sits on top of both. Context without intent is a well-informed agent that still doesn't know what you actually care about.

What I Built

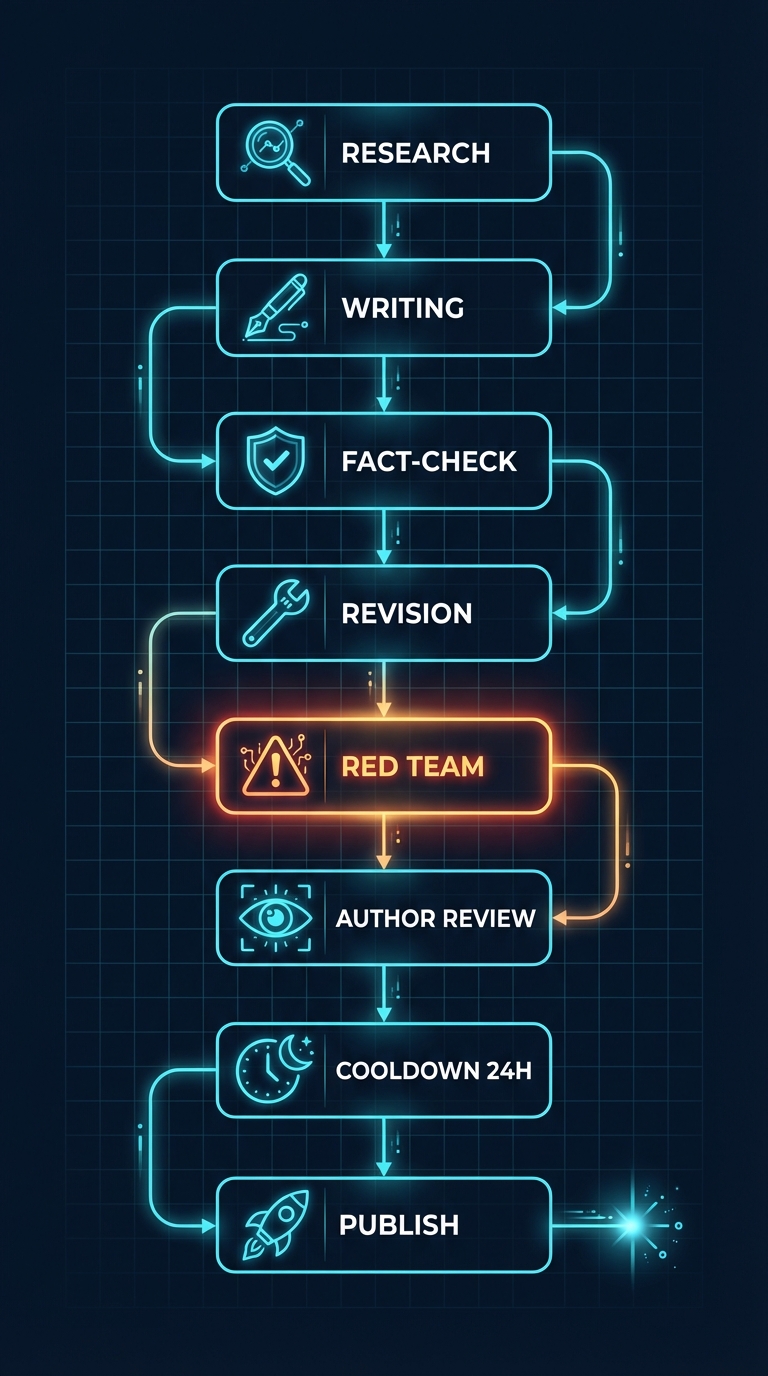

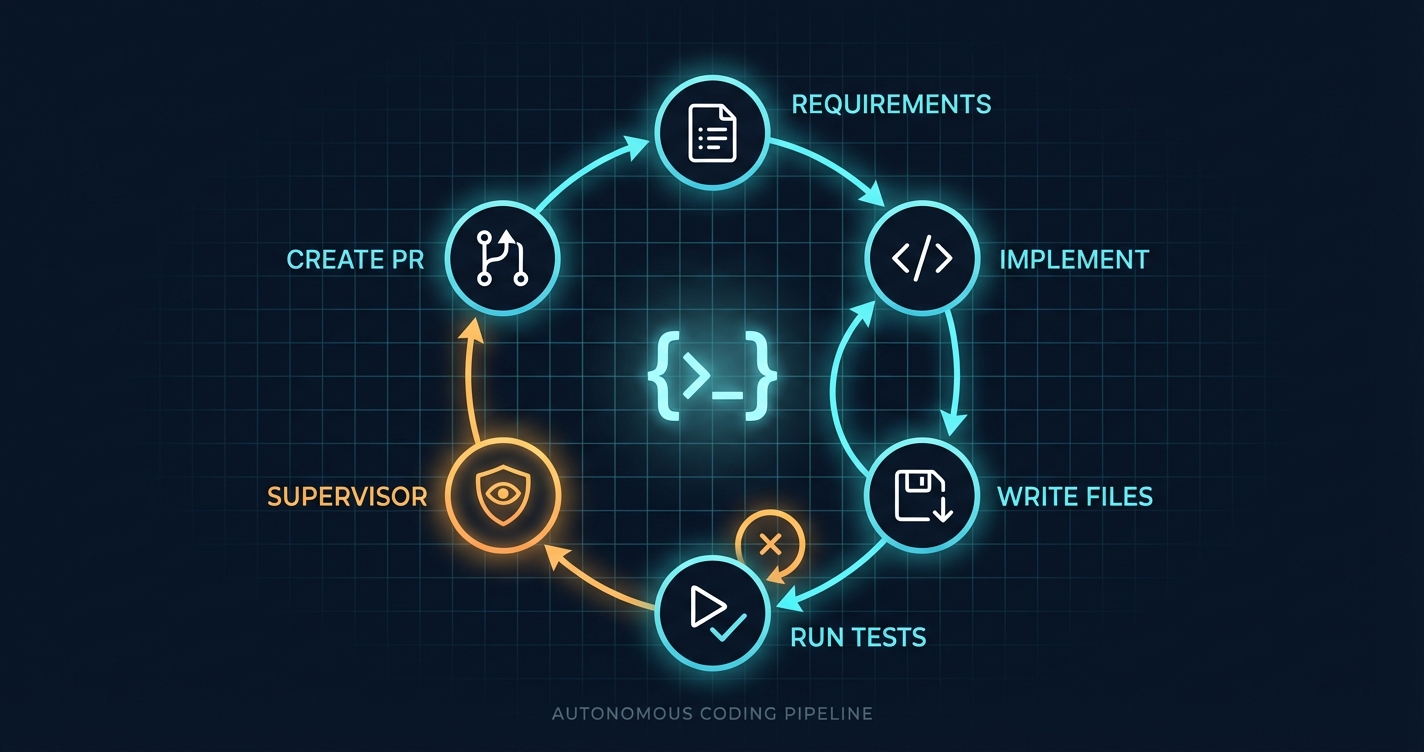

In February 2026, I built an orchestration engine using OpenClaw and Claude. The architecture is simple on purpose: YAML-defined pipelines, three executors (Anthropic API direct, OpenClaw sub-agents, and Gemini via local proxy), model tier assignments based on task complexity, and enforcement logic that keeps agents from skipping steps.

That last part matters more than it sounds. Agents skip steps when unsupervised. They do it confidently, efficiently, and wrong. The orchestrator exists to enforce the process — not because the agents can't do the work, but because they'll cheerfully cut corners on work that doesn't feel important to them. The orchestrator's opinion about what's important is the encoded intent.
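One way to picture that enforcement is a small gate that only accepts phases in their defined order. This is a minimal sketch, not the engine's actual code: `PhaseEnforcer`, `SkippedPhaseError`, and the phase names are hypothetical, and it assumes a pipeline is simply an ordered list of phase names.

```python
class SkippedPhaseError(Exception):
    """Raised when an agent tries to complete a phase out of order."""


class PhaseEnforcer:
    """Tracks progress through an ordered list of phases and rejects skips."""

    def __init__(self, phases):
        self.phases = list(phases)
        self.next_index = 0

    def complete(self, phase):
        # Only the next phase in the defined order may be completed.
        if self.next_index >= len(self.phases):
            raise SkippedPhaseError(f"pipeline already finished; got {phase!r}")
        expected = self.phases[self.next_index]
        if phase != expected:
            raise SkippedPhaseError(f"expected {expected!r}, got {phase!r}")
        self.next_index += 1

    @property
    def done(self):
        return self.next_index == len(self.phases)


# Hypothetical phase names, for illustration only.
enforcer = PhaseEnforcer(["research", "draft", "red_team", "edit"])
enforcer.complete("research")
enforcer.complete("draft")
try:
    enforcer.complete("edit")  # an agent trying to skip the red team
    skipped = False
except SkippedPhaseError:
    skipped = True

print(skipped)        # True
print(enforcer.done)  # False
```

The point of the sketch is that the ordering lives outside the agents. An agent can be confident about skipping the red team; the gate does not care.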

The first pipeline I ran sent 9 agents across 8 phases in 15 minutes. It runs on a Raspberry Pi because minimal infrastructure is a design constraint, not a limitation. If you need a data center to orchestrate your agents, you've built a different kind of problem.

The content pipeline is the example people ask about because it's the most intuitive. But the engine is domain-agnostic. It runs coding pipelines — requirements through implementation, code review, fixes, and testing. Security audits. UX audits. Research pipelines. Translation workflows. The architecture doesn't care what's flowing through it. It cares that every phase has explicit objectives, success conditions, constraints, and escalation rules. That's intent, expressed as infrastructure.
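As a rough illustration of "intent, expressed as infrastructure," a phase definition could refuse to run until all four intent fields are filled in. The sketch below uses a Python dataclass rather than the engine's YAML format, and every name in it (`PhaseSpec`, `missing`, the example phases) is an assumption made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class PhaseSpec:
    """One pipeline phase plus the four intent fields described above."""

    name: str
    objective: str = ""
    success_conditions: list = field(default_factory=list)
    constraints: list = field(default_factory=list)
    escalation_rules: list = field(default_factory=list)

    def missing(self):
        """Return the names of intent fields that were left empty."""
        required = ("objective", "success_conditions",
                    "constraints", "escalation_rules")
        return [name for name in required if not getattr(self, name)]


# Hypothetical phases, for illustration only.
complete_phase = PhaseSpec(
    name="code_review",
    objective="Catch defects before they reach testing",
    success_conditions=["every changed file has been reviewed"],
    constraints=["do not rewrite code outside the diff"],
    escalation_rules=["hand off to a human on any security finding"],
)
vague_phase = PhaseSpec(name="polish", objective="Make it better")

print(complete_phase.missing())  # []
print(vague_phase.missing())     # ['success_conditions', 'constraints', 'escalation_rules']
```

A loader that rejects any phase with a non-empty `missing()` list would turn "we never wrote it down" into a startup error instead of a production surprise.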

Model tier assignments encode values, not just economics. Haiku 4.5 handles mechanical tasks — formatting, structural fixes, consistency checks. Sonnet 4.6 does research and writing, where synthesis and voice matter. Opus 4.6 handles the red team and editorial judgment, where getting it wrong has consequences. Cheap where cheap is right. Expensive where expensive earns its cost. That allocation is a value statement about where quality matters most.
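A tier assignment like this can be as small as a lookup table. The sketch below is an assumption about shape, not the engine's real configuration: the task kinds are hypothetical labels, and the tier names are shorthand rather than actual API model identifiers.

```python
# Hypothetical task kinds mapped to the three tiers described above.
TIER_BY_TASK = {
    "formatting": "haiku",
    "structural_fix": "haiku",
    "consistency_check": "haiku",
    "research": "sonnet",
    "writing": "sonnet",
    "red_team": "opus",
    "editorial_judgment": "opus",
}


def pick_tier(task_kind):
    """Return the tier for a task kind, failing loudly on unknown kinds."""
    try:
        return TIER_BY_TASK[task_kind]
    except KeyError:
        raise ValueError(f"no tier assigned for task kind {task_kind!r}") from None


print(pick_tier("formatting"))  # haiku
print(pick_tier("red_team"))    # opus
```

Raising on an unknown task kind is a deliberate choice in this sketch: a silent fallback to the cheapest tier would itself be an unstated value judgment.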

The comparable tools in this space — LangGraph, CrewAI, AutoGen, Dify, Promptflow, Langflow — each solve parts of the orchestration problem. Promptflow is dead and nobody threw a funeral. The ones that survive will be the ones that move beyond context routing into intent specification. The plumbing is necessary. It's not sufficient.

The Pipeline That Killed Its Own Humor

Version 3 of my content pipeline is the best cautionary tale I can offer about what happens when you encode the wrong intent.

I had seven agents, each optimized for risk reduction. Fact-checking, source verification, logical flow analysis, tone calibration, consistency checking, adversarial review, and editorial polish. Every agent was individually excellent. The pipeline produced content that was accurate, well-sourced, logically coherent, and completely devoid of personality.

The pipeline worked perfectly. It successfully automated the removal of everything that made me sound like me.

Seven agents optimized for "don't publish anything embarrassing" will, given enough iterations, converge on content that cannot possibly embarrass anyone because it cannot possibly interest anyone. The intent was risk minimization. The outcome was a very expensive machine for producing forgettable text.

What was missing wasn't a "humor agent" or a "personality pass." What was missing was a value hierarchy that said: voice preservation is as important as factual accuracy. Without that explicit ranking, every agent treated personality as a risk to be mitigated. They weren't wrong, given their instructions. They were precisely right about the wrong thing.

I rebuilt it across versions 2.1 through 2.6, each one added because something went wrong. The current pipeline includes an author voice brief that's treated as a constraint, not a suggestion. The red team checks for condescension, not just factual errors. A 24-hour cooldown exists because I noticed time reliably changes my mind about things I was certain of.

The lesson generalizes beyond content. Any multi-agent system without an explicit value hierarchy will optimize for whichever objective is easiest to measure. Risk is easy to measure. Quality is easy to measure. Voice, loyalty, trust, relationships — those require intent specifications that most teams never write down.

What Intent Engineering Actually Requires

The abstract version: encode what you actually care about, not just what you can measure. The practical version has four components.

A value hierarchy. When agent goals conflict — speed versus thoroughness, cost versus quality, short-term resolution versus long-term retention — which wins? Most teams never write this down. The model resolves the conflict using its own defaults, which are optimized for general helpfulness, not your specific organizational values. If you haven't specified which value wins, you've delegated that decision to a system that doesn't know what you care about.
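Written down, a value hierarchy can be nothing more than an ordered list that conflicts are resolved against. The following is a minimal sketch under stated assumptions: the ordering in `VALUE_ORDER` is an invented example, not a recommendation, and `resolve` and the option format are hypothetical.

```python
# Hypothetical ranking, highest priority first.
VALUE_ORDER = ["customer_retention", "quality", "speed", "cost"]


def resolve(options):
    """Pick the option whose served value ranks highest in VALUE_ORDER."""
    rank = {value: position for position, value in enumerate(VALUE_ORDER)}
    unknown = [o["serves"] for o in options if o["serves"] not in rank]
    if unknown:
        # An unranked value is a gap in the hierarchy, not something to guess at.
        raise ValueError(f"values missing from the hierarchy: {unknown}")
    return min(options, key=lambda o: rank[o["serves"]])


options = [
    {"action": "close ticket now", "serves": "speed"},
    {"action": "follow up tomorrow", "serves": "customer_retention"},
]
print(resolve(options)["action"])  # follow up tomorrow
```

Reorder the list so that `speed` comes first and the same code closes the ticket. The logic is trivial; the ordering is where the organizational decision lives.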

Outcome definitions that match actual goals. A closed ticket is an event. A satisfied customer is an outcome. A loyal customer is a goal. Most agents are optimized for the event because it's measurable. Tericsoft's framework draws the distinction cleanly: an instruction says "generate a financial summary"; an intent says "enable leadership to make a funding decision within five minutes by presenting the three most critical financial indicators, highlighting risks, and summarizing cash runway projections." (Tericsoft, Feb 2026) Same task, completely different optimization target.

Explicit error asymmetry. Not all mistakes cost the same. In my content pipeline, a false positive from the red team (flagging something fine as problematic) costs a revision cycle. A false negative (missing something a domain expert would find dismissive) costs credibility with exactly the audience I'm trying to reach. Because the second mistake is far more expensive than the first, the red team should flag more readily than a neutral reviewer would. Those flagging thresholds should be deliberate, not default.
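The asymmetry can be made explicit with a standard expected-cost rule: flag whenever the expected cost of staying silent exceeds the expected cost of a needless flag. The sketch below assumes the reviewer can produce a probability that a passage is problematic; the cost numbers are invented units, and `flag_threshold` and `should_flag` are hypothetical names.

```python
def flag_threshold(cost_false_positive, cost_false_negative):
    """Probability above which flagging is the cheaper mistake to risk.

    Flag when p * cost_false_negative >= (1 - p) * cost_false_positive,
    which rearranges to p >= cost_fp / (cost_fp + cost_fn).
    """
    return cost_false_positive / (cost_false_positive + cost_false_negative)


def should_flag(p_problem, cost_false_positive, cost_false_negative):
    """Decide whether to flag, given the estimated probability of a problem."""
    return p_problem >= flag_threshold(cost_false_positive, cost_false_negative)


# Assumed costs: a wasted revision cycle = 1 unit, lost credibility = 9 units.
print(should_flag(0.2, 1, 9))   # True: a 20% chance of a problem is enough to flag
print(should_flag(0.05, 1, 9))  # False
print(should_flag(0.2, 1, 1))   # False: with symmetric costs the bar is 50%
```

With symmetric costs the reviewer needs to be more than half sure before flagging. With a 9-to-1 asymmetry the bar drops to 10%. Choosing that ratio on purpose is the deliberate part.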

Escalation logic as encoded values. Every escalation rule is a statement about what you care about. "Escalate when the customer asks for a human" is one value set. "Escalate when sentiment detects frustration, regardless of request" is another. These aren't neutral technical choices. They're organizational values expressed as code.
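The two rules quoted above look almost identical as code, which is the point. This sketch assumes a sentiment score between -1 and 1 is computed elsewhere; the function names, the keyword check, and the -0.5 threshold are all hypothetical.

```python
def escalate_on_request(message, sentiment):
    """Value set one: hand off only when the customer asks for a human."""
    return "human" in message.lower()


def escalate_on_frustration(message, sentiment, threshold=-0.5):
    """Value set two: also hand off when sentiment signals frustration."""
    return "human" in message.lower() or sentiment <= threshold


# A frustrated customer who never asks for a person.
message = "This is the third time I've explained this."
sentiment = -0.8  # assumed output of a separate sentiment model

print(escalate_on_request(message, sentiment))      # False
print(escalate_on_frustration(message, sentiment))  # True
```

Same customer, same message, different outcome. The difference is one clause, and that clause is a statement about what the organization values.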

CIO.com put the ongoing commitment clearly: "AI alignment is not a one-time fix, but an ongoing assurance discipline." (CIO.com, July 2025) Even a well-designed intent layer drifts. The cooldown and human review gates in my pipeline exist partly for this reason — they're not just quality checks, they're alignment checks.

The Intent Race

The orchestration engine is going open source under MIT license. Free for everyone.

Not because I think it's the best orchestration tool — the space has legitimate options. Because the lessons encoded in it are worth more distributed than hoarded. The translation incident that created the red team phase. The v3 pipeline that taught me about value hierarchies. The 24-hour cooldown that taught me about the gap between confidence and correctness. Those lessons became features. Those features should be shared.

The vision is a visual block builder with an AI assistant that verifies pipelines — something any team can use to encode their own intent, regardless of domain. Content, coding, translation, image generation, customer support, financial analysis, research. The architecture doesn't care. The intent layer does.

Deloitte's 2026 technology predictions frame the moment: the challenge isn't whether multi-agent systems work. It's whether they carry coherent intent across agent networks. (Deloitte, Nov 2025) Jason at DEV.to put the shift in the sharpest terms: "It is no longer about who has the smartest AI. It is about who has built the organizational infrastructure that lets AI operate with the fullest, most accurate, most strategically correct understanding of what the organization is actually trying to accomplish." (DEV.to, Feb 2026)

The models are extraordinary. They're also table stakes. The intelligence race is settling. The intent race has begun. And before your next agent ships, one question is worth forcing into the room:

If the agent has to choose between two outcomes that both satisfy the stated objective — which one do we want? And have we told it?

What's your intent gap?

Sources

- Nate Jones, Klarna saved $60 million and broke its company — Substack, Feb 24, 2026

- Jason, The AI Race Is Over. A New Race Has Already Begun — DEV.to, Feb 25, 2026

- Gartner, Over 40% of Agentic AI Projects Will Be Canceled by End of 2027 — Gartner, June 25, 2025

- MIT/Fortune, 95% of generative AI pilots at companies are failing — Fortune, Aug 2025

- Andrej Karpathy on context engineering — X, June 25, 2025

- LangChain, Context Engineering for Agents — LangChain Blog, Oct 2025

- Paweł Huryn, The Intent Engineering Framework for AI Agents — Product Compass, Jan 13, 2026

- CIO.com, AI, align thyself — CIO.com, July 1, 2025

- Deloitte, Unlocking exponential value with AI agent orchestration — Deloitte, Nov 2025

- Entrepreneur, Klarna Is Hiring Customer Service Agents After AI Couldn't Cut It — Entrepreneur, May 9, 2025

- Tericsoft, Intent Engineering in AI: The Shift Beyond Context Engineering — Tericsoft, Feb 2026