Twenty Minutes Apart, Two Futures: Why I Chose Coordination Over Delegation

By Conny Lazo

Builder of AI orchestras. Project Manager. Shipping things with agents.

Two visions of the future shipped within minutes of each other.

OpenAI shipped Codex 5.3 on a February afternoon. Anthropic followed with Claude Opus 4.6 almost simultaneously. Same day, same hour, completely different answers to the same question: what should AI agents actually do for you?

Based on what I've observed from articles, benchmarks, and hands-on experimentation, these two approaches serve fundamentally different purposes. One lets you delegate and walk away. The other lets you coordinate and stay involved.

I have a preference for multi-agent orchestration — for now. But this space changes constantly, and what works today may look different tomorrow as models evolve and costs drop. Here's how I think about the choice.

The Great Divide

Codex says: "Hand me the task, go get coffee for three hours, come back to finished work." It's the brilliant employee who disappears into a quiet room and emerges with perfect code.

Claude says: "Let's work together across all your tools, with multiple agents talking to each other, handling everything from legal memos to marketing copy." It's the coordinated team that plugs into your existing workflow.

Both approaches work. They're solving fundamentally different problems.

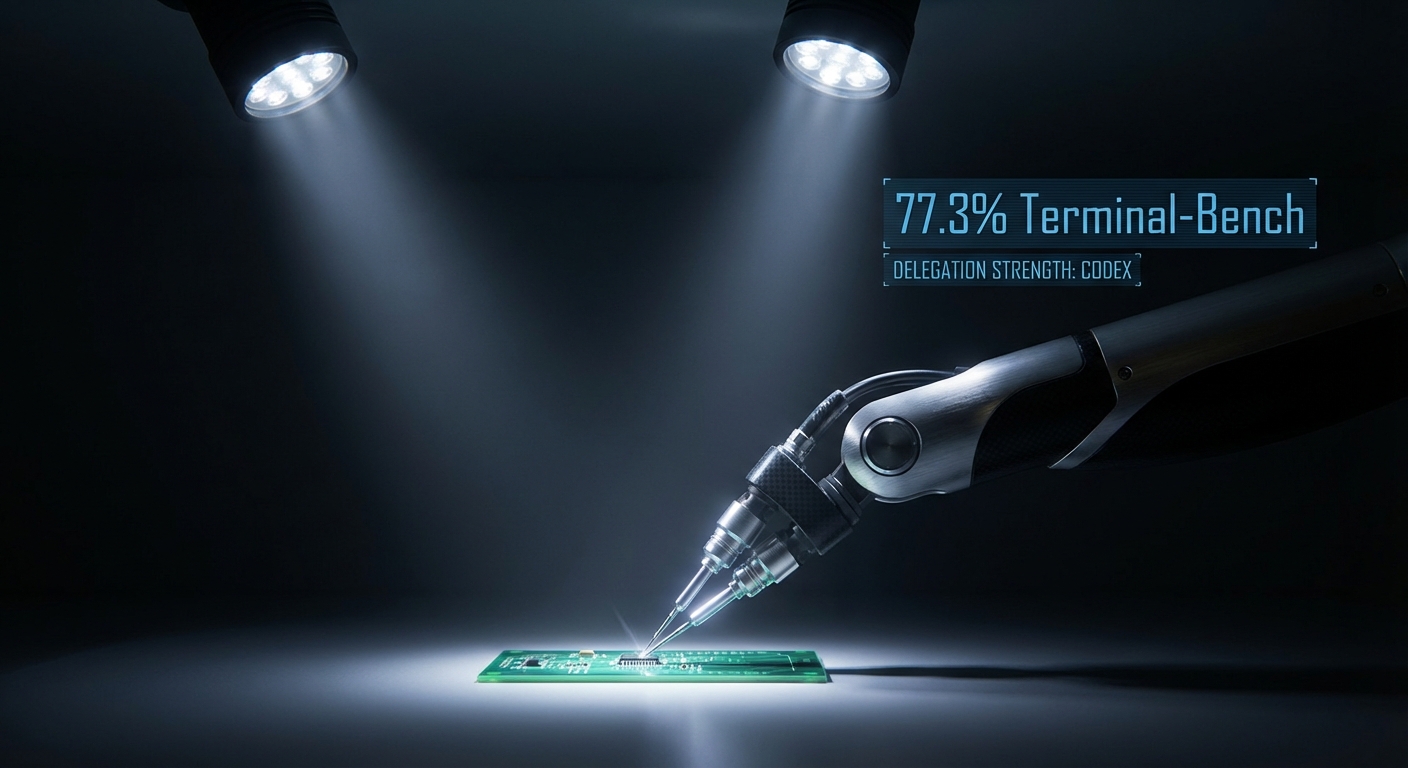

The numbers tell part of the story. Codex leads Terminal-Bench 2.0 with 77.3% versus Claude's 65.4%, a gap of roughly 12 points. On the kind of task your engineering team would estimate at two sprint days, Codex is more likely to finish overnight and get it right on the first try.

But correctness isn't everything.

Why I Lean Toward Orchestration

My experiments with multi-agent coordination have been promising. Running multiple specialized agents — each handling a different part of a workflow — produces results that feel qualitatively different from single-agent delegation.

The economics are interesting too. Community analysis suggests subscriptions run up to 36x cheaper than API calls for heavy orchestration users. But the real value isn't cost — it's workflow integration.

The work I find most valuable crosses boundaries. Content creation that chains research into writing into fact-checking into distribution. Data analysis that pulls from multiple sources, cross-references findings, and generates structured reports. Translation systems where context flows between analysis, translation, and quality review.

Claude's MCP integration lets agents work inside existing tools — committing to repositories, updating project trackers, routing notifications. That's coordination in practice, not just in theory.

But I want to be clear: this is where the space is right now. We optimize for token usage today; tomorrow costs may drop so dramatically that the calculus changes entirely. Best practices in AI are a moving target.

Codex Deserves Credit

I'm not dismissing delegation. Codex 5.3 is genuinely impressive.

OpenAI used earlier versions of the model to debug training code and optimize infrastructure during its own development. When Sam Altman, CEO of the company that made ChatGPT, calls a different product "the most loved internal product we've ever had," that means something.

The three-layer architecture—orchestrator, executors, recovery layer—produces work you can trust without reviewing every line. For complex, self-contained technical challenges, that correctness optimization changes how you plan sprint capacity.
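To make the three layers concrete, here is a toy sketch of the general pattern: an orchestrator walks a list of steps, an executor runs each one, and a recovery layer retries failures a bounded number of times. This is not how OpenAI implements Codex, which the sources here do not describe. The names `orchestrate`, `execute_with_recovery`, and `max_attempts` are hypothetical.

```python
from typing import Callable, List, Optional

# Hypothetical step type: a unit of work that returns a result or raises.
Step = Callable[[], str]


def execute_with_recovery(step: Step, max_attempts: int = 3) -> str:
    """Executor plus recovery layer: retry a failing step up to max_attempts times."""
    last_error: Optional[Exception] = None
    for _ in range(max_attempts):
        try:
            return step()
        except Exception as err:  # recovery layer: remember the error and retry
            last_error = err
    raise RuntimeError(f"step failed after {max_attempts} attempts") from last_error


def orchestrate(steps: List[Step]) -> List[str]:
    """Orchestrator: run every step in order and collect the results."""
    return [execute_with_recovery(step) for step in steps]


if __name__ == "__main__":
    attempts = {"count": 0}

    def flaky_step() -> str:
        # Fails once, then succeeds, to exercise the recovery path.
        attempts["count"] += 1
        if attempts["count"] < 2:
            raise ValueError("transient failure")
        return "refactor applied"

    print(orchestrate([flaky_step, lambda: "tests passed"]))
```

In this sketch the caller only sees finished results or a single final error, which is the property that makes delegation feel trustworthy.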

Codex earned the first "high capability cybersecurity classification" from red team evaluators on OpenAI's preparedness framework. While OpenAI notes they don't have definitive evidence it can fully automate cyber operations end-to-end, the classification triggered additional safety protocols before release. That level of autonomous capability is unprecedented for a commercially available model.

If your highest-value work fits the delegation pattern—deep, technical, self-contained problems—Codex is the better bet.

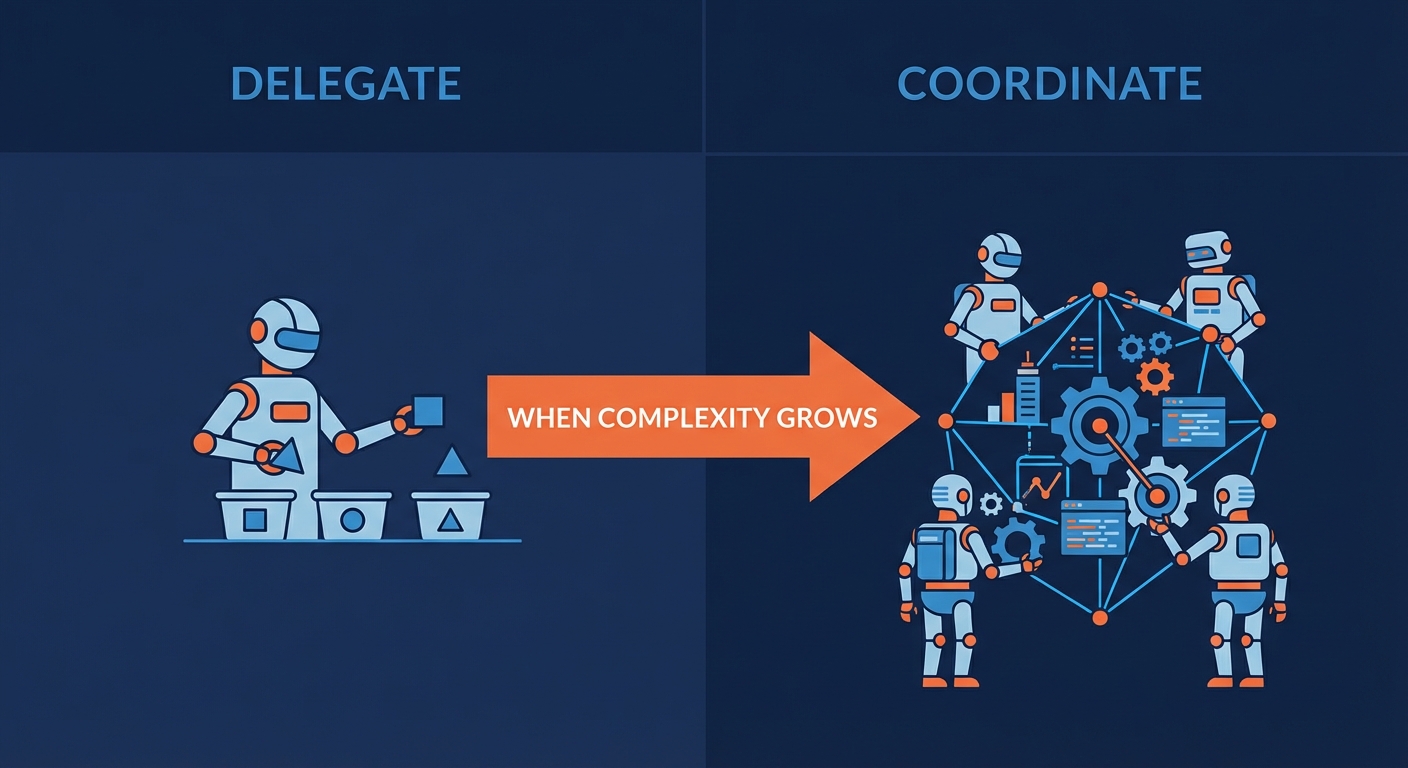

The Framework That Matters

The choice isn't which model benchmarks higher. It's which problem shape you're solving:

Delegation-shaped problems: Complex, self-contained work that benefits from isolation. Refactoring a payment processing module. Analyzing 200 vendor contracts for non-standard terms. Building a feature that touches a dozen files but has clear requirements.

Coordination-shaped problems: Work that spans tools, involves interdependent pieces, and benefits from human-in-the-loop oversight. Quarterly closes that pull actuals from accounting, compare against forecasts in sheets, and draft variance explanations in docs. Product launches where press releases, landing pages, email sequences, and social posts all need to align and reference each other.

Most organizations need both. The skill isn't picking the right tool once—it's knowing which pattern fits each task.

For my current projects, coordination fits better. Multi-source data pipelines, content workflows with built-in quality gates, translation systems that need consistency across chapters — these are coordination-shaped problems.

But when the task is a deep, self-contained technical challenge that requires sustained accuracy, delegation patterns make more sense. The smart approach isn't loyalty to one paradigm — it's reading the shape of the problem.

What Coordination Looks Like in Practice

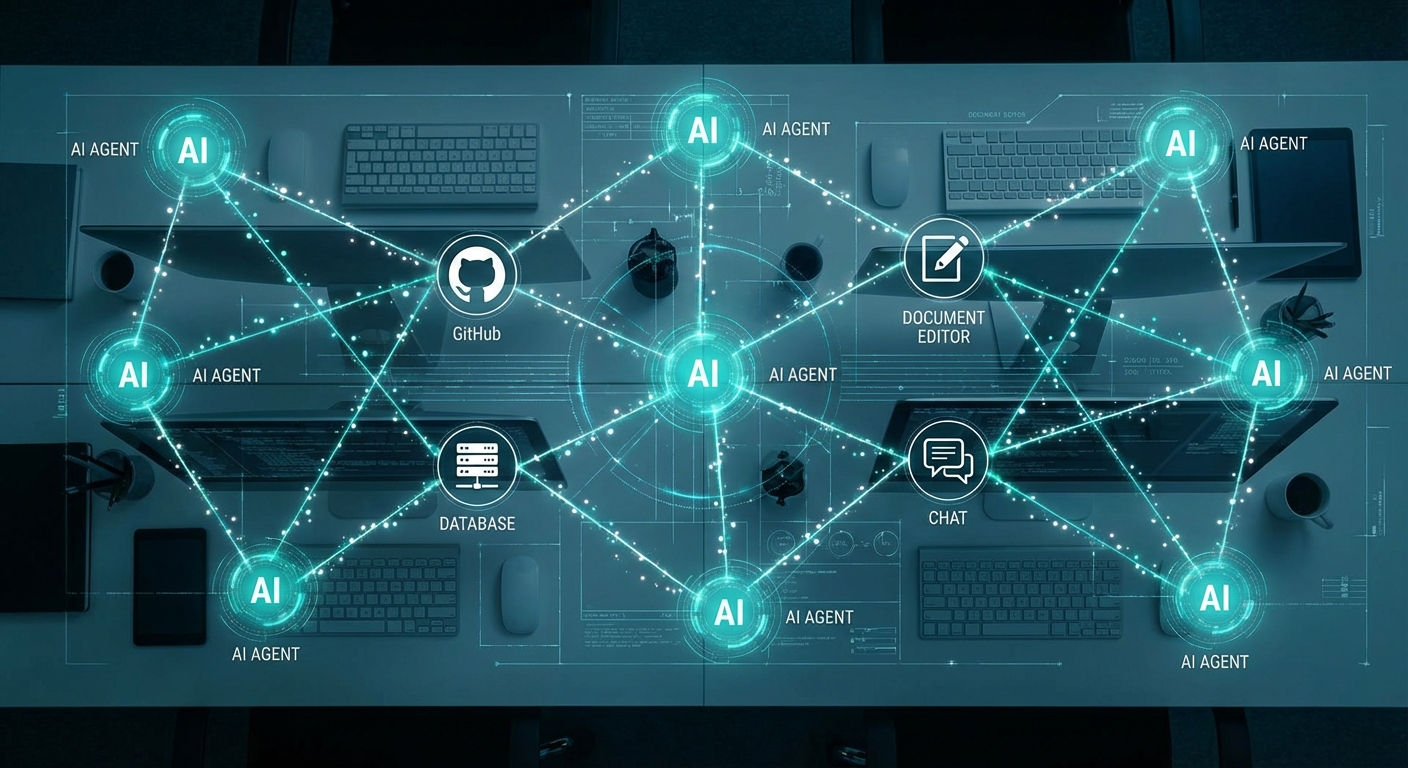

The architecture behind multi-agent orchestration is surprisingly minimal. A few core tools — read, write, edit, bash — connected through MCP to everything else: GitHub, databases, cloud storage, project trackers.

Where delegation works in isolated environments and hands back results, coordination embeds agents into existing infrastructure. A content pipeline where one agent researches, another writes, a third fact-checks — each working in the same repository, each aware of what the others produced. The output lands where it belongs in the workflow, not in a separate handoff.
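The content pipeline described above can be sketched in a few lines. This is a minimal illustration, assuming agents are plain functions and the shared repository is an in-memory `Workspace`. Every name here (`Workspace`, `run_pipeline`, the three agent functions) is hypothetical, and a real setup would call models and tools through MCP instead of returning strings.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class Workspace:
    """Stand-in for the shared repository every agent reads from and writes to."""
    artifacts: Dict[str, str] = field(default_factory=dict)
    log: List[str] = field(default_factory=list)


# Hypothetical agent signature: read the workspace, return this stage's output.
Agent = Callable[[Workspace], str]


def researcher(ws: Workspace) -> str:
    return f"notes on {ws.artifacts['topic']}"


def writer(ws: Workspace) -> str:
    # The writer builds on what the researcher left in the workspace.
    return f"draft based on [{ws.artifacts['research']}]"


def fact_checker(ws: Workspace) -> str:
    # Toy check: the draft must still contain the research it cites.
    grounded = ws.artifacts["research"] in ws.artifacts["draft"]
    return "pass" if grounded else "fail"


STAGES: List[Tuple[str, Agent]] = [
    ("research", researcher),
    ("draft", writer),
    ("review", fact_checker),
]


def run_pipeline(topic: str, stages: List[Tuple[str, Agent]]) -> Workspace:
    ws = Workspace(artifacts={"topic": topic})
    for name, agent in stages:
        ws.artifacts[name] = agent(ws)  # output lands in the shared workspace
        ws.log.append(name)
    return ws


if __name__ == "__main__":
    result = run_pipeline("agent orchestration", STAGES)
    print(result.log)
    print(result.artifacts["review"])
```

The design choice worth noticing is that no stage hands its result to a caller. Each one writes into the same workspace, so later agents can see everything earlier agents produced.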

As workflows grow more complex, this integration compounds in value. It also adds complexity: orchestration systems require more upfront architecture than delegation patterns. Whether that investment pays off depends on your specific use case.

Which Approach Ages Better?

Codex bets that individual agents will become so capable that coordination becomes unnecessary. If one agent can handle an entire system end-to-end, agent-to-agent communication is just overhead.

Claude bets that valuable work stays fundamentally interdependent. Building products isn't just building frontend and backend separately and hoping they fit together. It's managing the edge cases, the dependencies, the human judgment calls that emerge when pieces interact.

Right now, I lean toward interdependence. Not because individual agents won't get smarter — they absolutely will — but because the most valuable problems today involve human judgment about priorities, tradeoffs, and edge cases that emerge from complex systems.

The network effects support this direction. Every new MCP integration expands what coordination can reach. But models are evolving so fast that today's best practice becomes tomorrow's legacy approach. The real skill isn't committing to one vision — it's staying adaptable as both approaches mature.

What This Means for Builders

If you're building your first AI workflow, ask three questions:

- Can you tolerate errors in initial output, or is correctness non-negotiable? Codex's architecture optimizes for getting complex technical challenges right on the first try. Claude optimizes for iteration and coordination.

- Does the work live in one environment or span multiple tools? Most knowledge work crosses boundaries. Choose your architecture accordingly.

- Is the work independent or interdependent? Five separate contract reviews versus a product launch where every piece needs to reference the others.

The answer shapes which organizational muscle you want to build: delegation or coordination.
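The three questions above can be turned into a small decision helper. This is an illustrative heuristic, not a rule from either vendor: the `Task` fields, the `suggest_pattern` name, and the tie-break for mixed answers are all assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    """Hypothetical task description with one field per question."""
    name: str
    correctness_critical: bool  # Q1: is first-pass correctness non-negotiable?
    spans_tools: bool           # Q2: does the work cross multiple tools?
    interdependent: bool        # Q3: do the pieces need to reference each other?


def suggest_pattern(task: Task) -> str:
    """Return "delegation" or "coordination" for a task (illustrative heuristic)."""
    coordination_signals = int(task.spans_tools) + int(task.interdependent)
    if coordination_signals == 2:
        return "coordination"
    if coordination_signals == 0:
        return "delegation"
    # Mixed answers on Q2 and Q3: assume strict correctness tips toward delegation.
    return "delegation" if task.correctness_critical else "coordination"


if __name__ == "__main__":
    refactor = Task("refactor payment module", True, False, False)
    launch = Task("product launch", False, True, True)
    print(suggest_pattern(refactor))
    print(suggest_pattern(launch))
```

Running it on the two examples from the framework section, the payment refactor comes out as delegation and the product launch as coordination. Real tasks will be messier, so treat the output as a starting point for the conversation.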

I'm building coordination muscle because my current highest-leverage work spans multiple tools and requires interdependence. The subscription economics make experimentation practical. The integration patterns fit how knowledge work actually flows.

But I keep delegation patterns in the toolkit for when the problem calls for sustained, autonomous accuracy on self-contained challenges. The space is moving too fast to be dogmatic about either approach.

The Future Shipped Simultaneously

Two visions shipped minutes apart. Both work. Both solve real problems. The question isn't which will win—it's which organizational muscle you want to develop.

I lean toward orchestration because today's most valuable problems are about coordination, not just capability. But this is a moment in time, not a permanent verdict. Models evolve. Costs drop. Workflows that seem optimal today will be reinvented tomorrow.

The agents are here. The skill isn't picking a winner; it's matching the tool to the problem and staying ready to adapt when the landscape shifts again.

Build for today. Architect for change.

Sources & Inspiration

- OpenAI Codex 5.3 Announcement — Official release details, Terminal-Bench 2.0 scores, and cybersecurity classification

- Anthropic Claude Opus 4.6 & Agent Teams — MCP integration, agent coordination architecture, and peer-to-peer communication model

- Terminal-Bench 2.0 Results — Independent benchmark verification: Codex 77.3% vs Claude 65.4%

- Reddit r/ClaudeAI — Subscription vs API Cost Analysis — Community analysis showing subscriptions up to 36x cheaper for heavy orchestration users

- Sam Altman on Codex — Called Codex "the most loved internal product we've ever had"; confirmed first model to help build itself

- Model Context Protocol (MCP) — GitHub — Open standard for AI-tool integration that enables coordination patterns