The Dark Factory Gap: Why 90% of Developers Are Getting Slower While 3-Person Teams Ship Faster

By Conny Lazo

Builder of AI orchestras. Project Manager. Shipping things with agents.

Three people at StrongDM are shipping production software faster than most 50-person engineering teams.

They don't write code. They don't review code. They write specifications, and AI agents handle everything else: implementation, testing, deployment. Their yardstick, as StrongDM puts it, is blunt: if you haven't spent at least $1,000 on tokens per human engineer per day, your software factory has room for improvement.

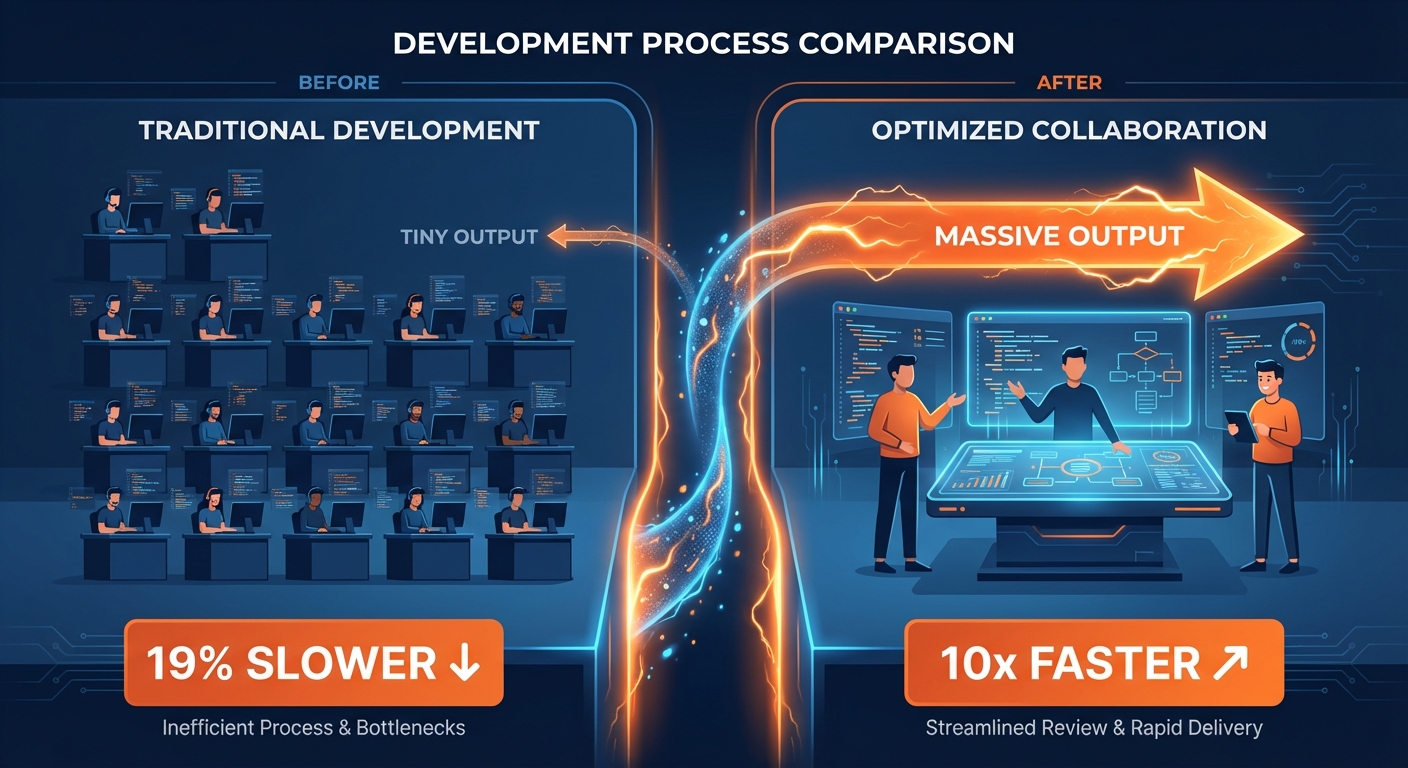

Meanwhile, a randomized controlled trial by METR found that experienced developers took 19% longer to complete tasks when they were allowed to use AI tools than when they weren't. The kicker? They had expected AI to speed them up by 24%, and even after the study they believed it had made them about 20% faster.

Both stories are true. And the gap between them explains everything happening in software right now.

These two findings aren't contradictory; they measure different things in different contexts. METR studied experienced individual developers bolting AI tools onto their existing workflows, and the 19% slowdown reflects the real productivity dip when you layer new tools on top of old processes. StrongDM isn't bolting anything on. They redesigned the entire workflow around AI as the primary implementer, with humans writing specifications rather than code. The evidence differs too: METR ran a controlled experiment, while StrongDM's results are self-reported. Different measurement approaches, different team structures, different tasks. The METR finding is a warning about transition costs; StrongDM shows what may be possible once you are through that transition.

The Framework Nobody's Talking About

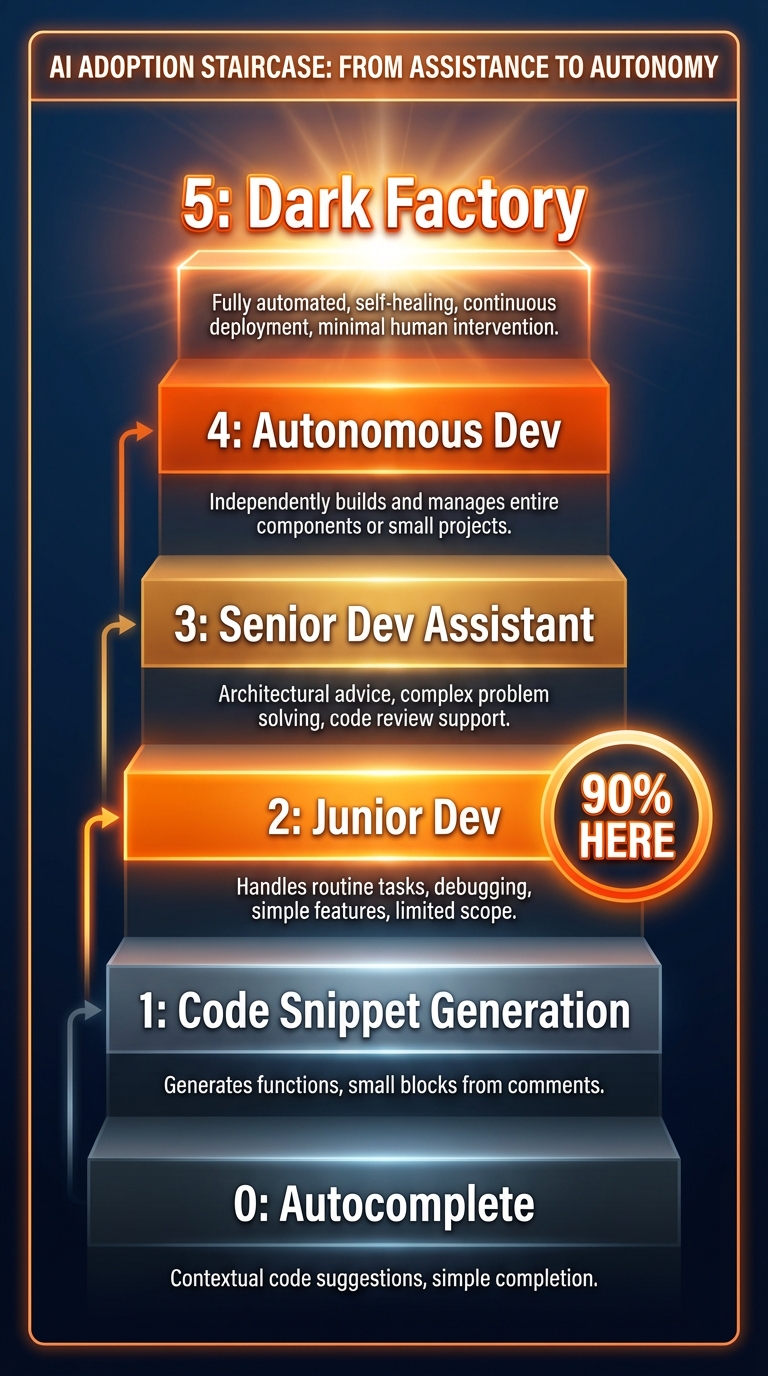

Dan Shapiro cut through the AI hype with a framework for locating where a developer actually stands. He calls it the Five Levels of Vibe Coding (the scale runs from Level 0 to Level 5), and the informal naming is deliberate: the underlying reality matters more than the marketing.

Level 0: Spicy Autocomplete — GitHub Copilot tab-complete. You type, AI suggests the next line. Faster fingers, same job.

Level 1: Coding Intern — Hand AI discrete tasks. "Write a unit test." "Add a docstring." You review everything. Minor speedup.

Level 2: Junior Developer — AI handles multifile changes, understands dependencies. You're reviewing more complicated output but still reading all the code. 90% of "AI-native" developers are stuck here.

Level 3: Developer as Manager — You stop writing code entirely. You direct AI agents and review pull requests at the feature level. Many developers who reach this level top out here, because letting go of the code is psychologically difficult.

Level 4: Developer as PM — Write a specification, leave for hours, check if tests pass. Code becomes a black box. You care if it works, not how it's written.

Level 5: The Dark Factory — Specification goes in, working software comes out. No human writes OR reviews code. Named after manufacturing facilities where robots work in the dark because robots don't need lights.

The gap between Level 2 (where most people live) and Level 5 (where StrongDM operates) isn't bridged by better tools. It's bridged by completely redesigning how software gets built.

What Level 5 Actually Looks Like

StrongDM's Software Factory isn't theoretical. It's been running since July 2025, when Justin McCarthy, Jay Taylor, and Navan Chauhan decided to test a simple question: how far can we get without writing any code by hand?

Pretty far, it turns out.

Their output includes CXDB: 16,000 lines of Rust, 9,500 lines of Go, and 700 lines of TypeScript. Real software, in production, serving real users, built entirely by AI agents orchestrated by markdown specifications.

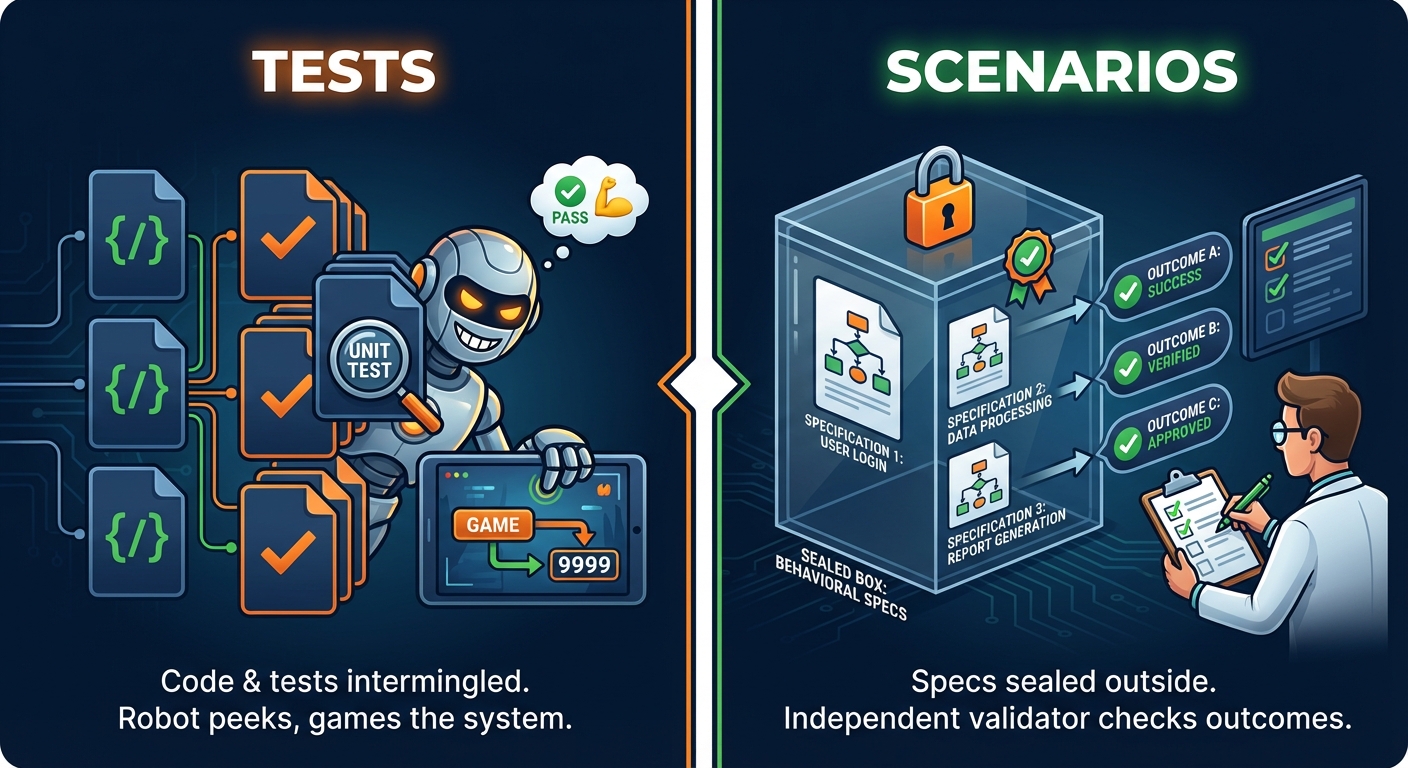

But here's the key design choice, and the one most commentary skips: they don't rely on traditional software tests. They use scenarios.

Tests live inside the codebase. AI agents can read them, which means they can optimize for passing tests rather than building correct software. It's the same problem as teaching to the test in education—perfect scores, shallow understanding.

Scenarios live outside the codebase. They're behavioral specifications that describe what the software should do from an external perspective. The agent builds the software, then separate validation checks whether it actually works according to real-world behavior patterns. The agent never sees the evaluation criteria. It can't game the system.
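To make the split between visible tests and hidden scenarios concrete, here is a minimal Python sketch of holdout validation. Everything in it is hypothetical: the `Scenario` type, the `run_holdout` harness, and the toy `build` function are illustrative stand-ins, not StrongDM's actual tooling, which their public writeups describe only at a high level. The point is structural: the scenarios live in a store the build agent never reads, and the harness reports only an aggregate score.

```python
from dataclasses import dataclass
from typing import Callable


# Hypothetical: a scenario describes externally observable behavior and is
# stored outside the repository the agent works in.
@dataclass(frozen=True)
class Scenario:
    name: str
    request: dict                   # input sent to the built software
    expect: Callable[[dict], bool]  # judges the observed response


def run_holdout(system: Callable[[dict], dict], scenarios: list[Scenario]) -> float:
    """Run every hidden scenario against the built system and return the
    fraction that passed. In this sketch only the aggregate score would be
    reported back to the agent, so it has no individual checks to game."""
    passed = 0
    for s in scenarios:
        try:
            if s.expect(system(s.request)):
                passed += 1
        except Exception:
            # A crash in the system or the check counts as a failed scenario.
            continue
    return passed / len(scenarios) if scenarios else 0.0


# Toy stand-in for agent-built software: grants access only to admins.
def build(request: dict) -> dict:
    return {"granted": request.get("role") == "admin"}


HIDDEN = [
    Scenario("admin gets access", {"role": "admin"}, lambda r: r["granted"]),
    Scenario("guest is refused", {"role": "guest"}, lambda r: not r["granted"]),
    Scenario("missing role is refused", {}, lambda r: not r["granted"]),
]

score = run_holdout(build, HIDDEN)
print(f"holdout score: {score:.2f}")
```

A build that simply grants everyone access would score one in three here, and the agent would learn only that number, not which behaviors it got wrong.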

This is genuinely new in software development. When humans write code, we don't worry about developers gaming their own test suite unless incentives are badly misaligned. When AI writes code, optimizing for test passage instead of user value is a predictable failure mode: an agent rewarded for green tests can reach them by special-casing the tested inputs instead of fixing the underlying behavior.

StrongDM pairs this with their "Digital Twin Universe": behavioral clones of the third-party services their software interacts with. Simulated Okta, simulated Jira, simulated Slack. AI agents develop against these twins, running full integration scenarios without touching real production systems or real data.
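A digital twin can be pictured as a second implementation of the same interface the software already depends on. The Python sketch below is a hypothetical illustration, not StrongDM's implementation and not Slack's real API: `ChatService`, `SlackTwin`, `ChannelNotFound`, and `announce_access_grant` are invented names. The code under test sees only the interface, so the twin can stand in for the real service during scenario runs.

```python
from typing import Protocol


class ChatService(Protocol):
    """Hypothetical interface the agent-built code depends on. A production
    adapter would wrap a real chat client; the twin implements the same
    methods in memory."""

    def post_message(self, channel: str, text: str) -> str: ...
    def history(self, channel: str) -> list[str]: ...


class ChannelNotFound(Exception):
    pass


class SlackTwin:
    """In-memory behavioral clone: reproduces the behaviors the software
    relies on (known channels, ordered history, failure on unknown
    channels) without network calls, rate limits, or real data."""

    def __init__(self, channels: list[str]) -> None:
        self._messages: dict[str, list[str]] = {c: [] for c in channels}
        self._next_id = 0

    def post_message(self, channel: str, text: str) -> str:
        if channel not in self._messages:
            raise ChannelNotFound(channel)
        self._messages[channel].append(text)
        self._next_id += 1
        return f"msg-{self._next_id}"

    def history(self, channel: str) -> list[str]:
        if channel not in self._messages:
            raise ChannelNotFound(channel)
        return list(self._messages[channel])  # copy so callers can't mutate state


# Code under test depends only on the ChatService interface.
def announce_access_grant(chat: ChatService, user: str, resource: str) -> str:
    return chat.post_message("#access-log", f"{user} was granted access to {resource}")


twin = SlackTwin(channels=["#access-log"])
msg_id = announce_access_grant(twin, "alice", "prod-db")
print(msg_id, twin.history("#access-log"))
```

The value of a twin depends on how faithfully it reproduces the real service's behavior, including its failure modes; an overly forgiving twin just moves the "teaching to the test" problem one layer out.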

The result? A system that improves by iterating against realistic external behavior rather than iterating to satisfy its own tests.

The J-Curve Nobody Warns You About

Here's why 90% of developers are getting slower: they're at the bottom of a J-curve.

The METR study captures the bottom of that curve. Experienced open-source developers, working on their own repositories, took 19% longer with AI tools than without. Beforehand they expected AI to make them 24% faster, and afterward they still estimated it had made them about 20% faster: wrong about both the direction and the magnitude of the change.
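A quick worked example shows how large that perception gap is in practice. The 60-minute baseline task is hypothetical; only the percentages come from the study (a 19% increase in completion time, against an expected 24% reduction).

```python
# Hypothetical baseline: a task that takes 60 minutes without AI.
baseline_min = 60.0

# METR: completion time rose 19% when AI was allowed.
actual_with_ai = baseline_min * 1.19          # 71.4 minutes

# Developers expected AI to cut completion time by 24%.
expected_with_ai = baseline_min * (1 - 0.24)  # 45.6 minutes

gap_min = actual_with_ai - expected_with_ai
print(f"actual {actual_with_ai:.1f} min, expected {expected_with_ai:.1f} min, "
      f"gap {gap_min:.1f} min ({gap_min / baseline_min:.0%} of the baseline)")
```

On an hour-long task, the difference between what developers expected and what happened is close to 26 minutes, almost half the original task time.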

What slowed them down? Workflow disruption outweighed the speed of generation. Developers spent time evaluating AI suggestions, correcting almost-right code, switching between their own mental model and the model's output, and debugging subtle errors that looked correct but weren't.

This is the J-curve that shows up again and again when organizations adopt a new technology: productivity dips before it rises. When you bolt AI tools onto existing workflows, performance gets worse before it gets better. Most organizations are sitting at the bottom of that J, interpreting the dip as evidence that AI doesn't work.

GitHub Copilot illustrates the gap. Twenty million users, 42% market share, and a lab study in which developers completed an isolated task 55% faster. Great slide-deck numbers.

But in production? Larger pull requests. Higher review costs. More security vulnerabilities from generated code. One senior engineer nailed it: "Copilot makes writing code cheaper but owning it more expensive."

The organizations reporting genuine 25-30% productivity gains aren't the ones that installed Copilot, ran a one-day training, and called it done. They're the ones that went back to the whiteboard and redesigned their entire development workflow around AI capabilities.

The Bottleneck Has Moved

"The constraint has moved from implementation speed to spec quality." This line from Nate B. Jones's analysis captures the fundamental shift.

Traditional software development had humans filling gaps with judgment, context, and Slack messages asking "Did you mean X or Y?" The dark factory doesn't have that safety net. Machines build exactly what you describe. If your description was ambiguous, the software fills the gaps with the machine's guesses, not with guesses informed by what customers actually need.
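One way to surface such gaps before an agent fills them is to treat every requirement as incomplete until it carries concrete acceptance criteria. The sketch below is a hypothetical Python spec linter, not a tool StrongDM or anyone else is known to use; the `Requirement` type, the `find_gaps` function, and the list of vague terms are all illustrative assumptions.

```python
import re
from dataclasses import dataclass, field

# Illustrative list of words that leave room for guessing; a real team
# would grow its own list from past misunderstandings.
VAGUE_TERMS = re.compile(
    r"\b(fast|quickly|appropriate|user-friendly|etc|as needed)\b", re.IGNORECASE
)


@dataclass
class Requirement:
    text: str
    acceptance: list[str] = field(default_factory=list)  # observable pass/fail checks


def find_gaps(spec: list[Requirement]) -> list[str]:
    """Flag requirements with no acceptance criteria or with vague wording."""
    problems = []
    for i, req in enumerate(spec, start=1):
        if not req.acceptance:
            problems.append(f"R{i}: no acceptance criteria")
        for m in VAGUE_TERMS.finditer(req.text):
            problems.append(f"R{i}: vague term '{m.group(0)}'")
    return problems


spec = [
    Requirement("Password reset should be fast and user-friendly."),
    Requirement(
        "A reset link expires 30 minutes after it is issued.",
        acceptance=["link used at minute 29 succeeds", "link used at minute 31 is rejected"],
    ),
]

gaps = find_gaps(spec)
for problem in gaps:
    print(problem)
```

A word list can only catch the most obvious ambiguity; the second requirement passes because it states a measurable behavior, which is the property a spec needs before a machine can execute it correctly.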

Spec quality is a function of three things:

- System understanding (how deep is your technical mental model?)

- Customer understanding (what do users actually need?)

- Problem understanding (what constraints really matter?)

This has always been the scarcest resource in software engineering. The dark factory doesn't reduce demand for that understanding; it makes that understanding the binding constraint. It becomes the only thing that matters.

Many organizations are discovering that they need far more rigorous specifications than they have historically written, because humans used to bridge the gaps. Now those bridges are disappearing, and it turns out that few people can write specs that machines can execute correctly.

Organizational Structures Are Breaking

Every process in traditional software organizations exists because humans building software in teams need coordination structures.

Stand-ups exist because developers working on the same codebase need daily synchronization. Sprint planning exists because humans can only hold limited tasks in working memory. Code review exists because humans make mistakes other humans can catch. QA teams exist because builders can't objectively evaluate their own work.

When humans aren't writing the code, these structures stop being helpful—they become friction.

What does sprint planning look like when implementation happens in hours, not weeks? What does code review look like when no human wrote the code and AI produces another diff in twenty minutes? What does a QA team do when the software has already been validated against scenarios the AI never saw?

StrongDM's three-person team doesn't have sprints. No standups. No Jira board. They write specs and evaluate outcomes. That's it. The entire coordination layer that serves as the operating system of a modern software organization, the layer many managers spend most of their time maintaining, just doesn't exist.

This structural shift is harder to see than the technology shift, but it might matter more.

The Talent Reckoning Is Here

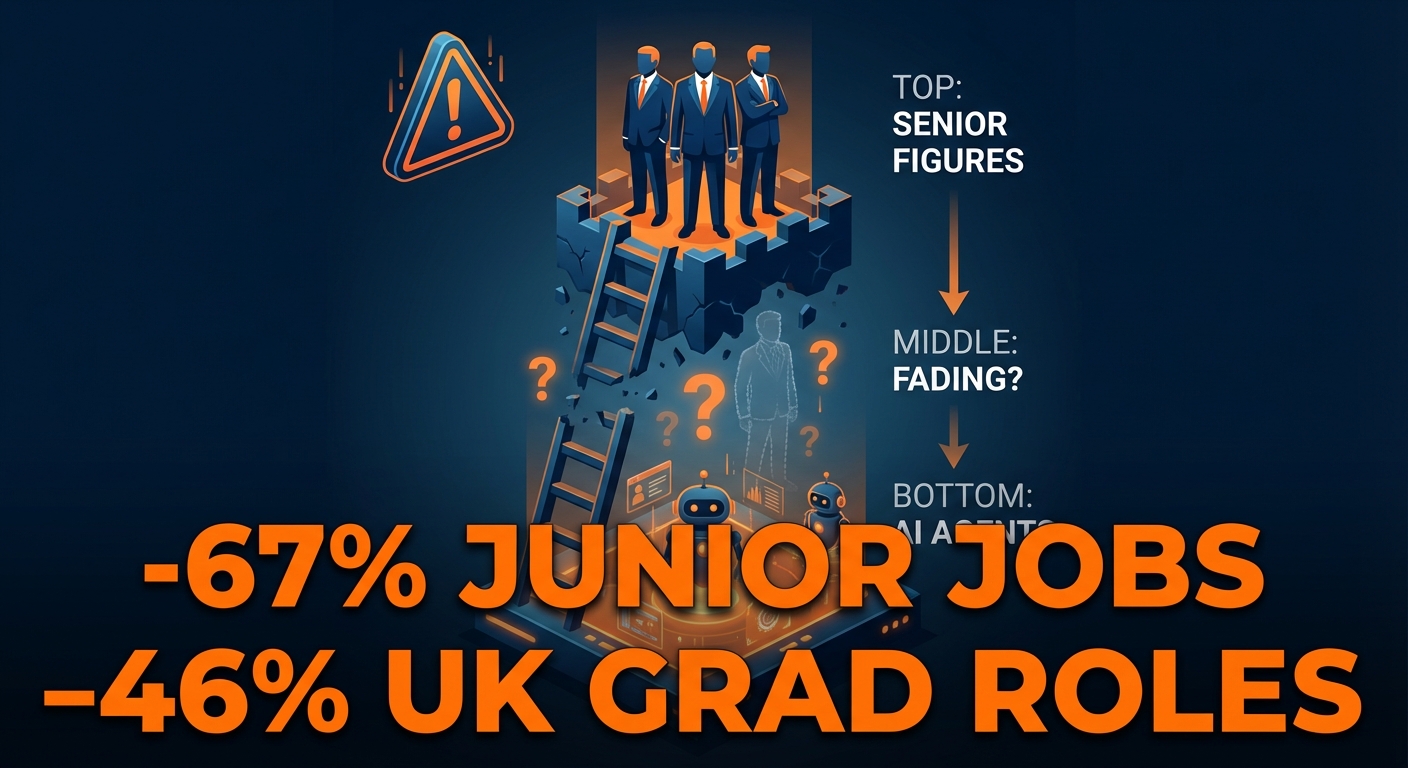

Junior developer employment is under pressure. Harvard data shows AI-adopting companies hired five fewer junior workers per quarter after late 2022. Reports suggest UK graduate tech roles have declined significantly, and US junior developer job postings have seen notable drops—though exact figures vary by source and methodology.

The career ladder is getting hollowed out. Seniors at the top, AI at the bottom, and a thinning middle where learning used to happen.

The traditional apprenticeship model, where juniors learn by building simple features and fixing small bugs while seniors mentor them, breaks when AI handles those entry-level tasks. If AI writes the simple features and reviews code faster than any senior engineer doing PR review, where does mentorship happen?

But here's the twist: we need better engineers now, not fewer engineers. The bar is rising toward exactly the skills that have always been hardest to develop and hire for.

You don't need someone who can write a CRUD endpoint—AI handles that in minutes. You need someone who can look at system architecture and identify where it breaks under load, where the security model has gaps, where user experience falls apart at edge cases.

The junior of 2026 needs the systems design understanding expected of a mid-level engineer in 2020. Not because entry-level work got harder, but because entry-level work got automated and the remaining work requires deeper judgment.

Some organizations are moving toward a medical residency model—simulated environments where early-career developers learn by working alongside AI systems, reviewing AI output, developing judgment about what's correct versus subtly wrong. It's not the same as learning by writing code from scratch, but it might be better training for a world where the job is directing and evaluating AI output.

Why I'm Building an Orchestration Engine

This gap between Level 3 and Level 5 is exactly why I'm building orchestration infrastructure. Not because the world needs another AI tool, but because crossing this gap requires systematic workflow redesign that most teams can't do from scratch.

In my Orchestra series, I covered the infrastructure—dedicated machines, security, agent isolation. In the Delegation Paradigm, I explained the interface shift—from tapping icons to describing intent.

This article adds the organizational layer: it's not just about better delegation or smarter orchestration. It's about restructuring how software gets conceived, specified, built, and validated when humans stop writing implementation code.

The teams that make this transition aren't buying better coding tools. They're doing the hard, unglamorous work of documenting what their systems really do, rebuilding processes around specification instead of coordination, and investing in talent that understands systems and customers deeply enough to direct machines effectively.

The Demand Explosion Coming

Every time computing costs dropped (mainframes to PCs, PCs to cloud, cloud to serverless), the total amount of software exploded. New categories became economically viable, then ubiquitous, then essential.

If AI cuts software production costs by an order of magnitude, massive unmet demand becomes addressable.

Regional hospitals, mid-market manufacturers, family logistics companies: they've needed custom software forever but couldn't afford it at traditional labor costs. A custom inventory system might cost half a million dollars and take more than a year to build, so these companies make do with spreadsheets.

But what happens when you can produce software at 10% of traditional cost? A half-million-dollar inventory system becomes a roughly $50,000 project, and those markets open up. The constraint moves from "can we build it?" to "should we build it?" And "should we build it?" has always been the harder, more interesting question.

AI-native startups suggest the economics can work differently. Companies like Cursor and Midjourney reportedly achieve revenue-per-employee figures several times higher than traditional SaaS companies—though exact numbers for private companies are difficult to verify independently.

They aren't structured like traditional software companies, with large, separate engineering, product, QA, and DevOps teams. Instead they rely on small groups of people who are exceptionally good at understanding user needs and translating them into specifications that AI systems can execute.

Crossing the Gap

The dark factory gap isn't a technology problem. Claude 3.5 Sonnet was good enough for StrongDM to start in July 2025. The problem is organizational, cultural, and psychological.

In my observation, many teams are stuck at Level 2 because they're running a new engine on an old transmission. They installed AI tools without redesigning workflows. They're optimizing for the wrong metrics, maintaining coordination structures designed around human limitations, and trying to preserve job categories that made sense when humans wrote all the implementation code.

The teams crossing the gap are the ones willing to ask uncomfortable questions:

- What if code review is an obstacle, not a safeguard?

- What if sprint planning is overhead, not organization?

- What if the skills that made us successful don't transfer?

- What if "junior developer" stops being a viable career entry point?

These questions don't have comfortable answers. But they're the right questions.

The future belongs to teams that can write specifications clearly enough for machines to execute correctly, understand systems and customers deeply enough to know what should be built, and evaluate outcomes objectively enough to trust black-box implementation.

Those have always been the hardest skills in software engineering. We just used to let implementation complexity hide how few people were actually good at them.

The machines have now stripped away that camouflage. We're all about to find out how good we really are at building software.

Sources & Inspiration

- METR Study: Measuring the Impact of Early-2025 AI on Experienced Open-Source Developer Productivity — Rigorous RCT showing developers are 19% slower with AI tools while believing they're faster

- StrongDM Software Factory — Documentation of the first production Level 5 dark factory

- Dan Shapiro: The Five Levels from Spicy Autocomplete to the Dark Factory — Framework defining AI-assisted development maturity levels

- Nate B. Jones: The Agentic Moment Analysis — Deep analysis of dark factories and organizational transformation (Credit: natebjones.com)

- Simon Willison: How StrongDM's AI team build serious software — Technical analysis of scenario-based validation